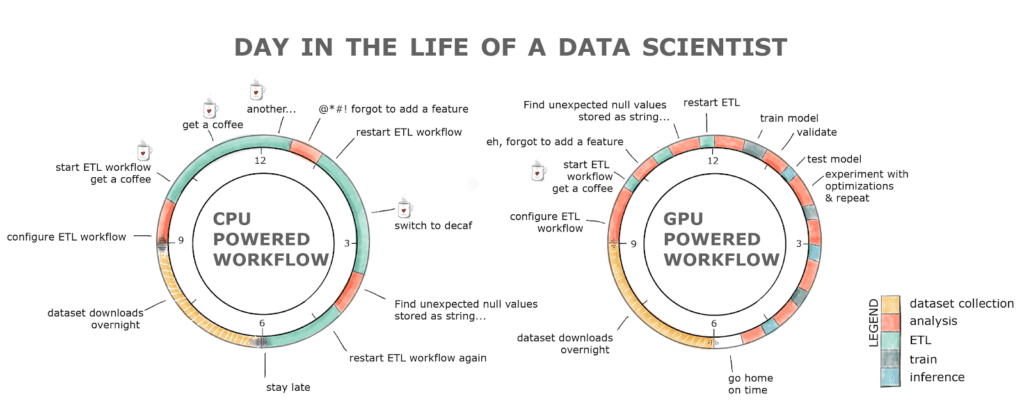

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

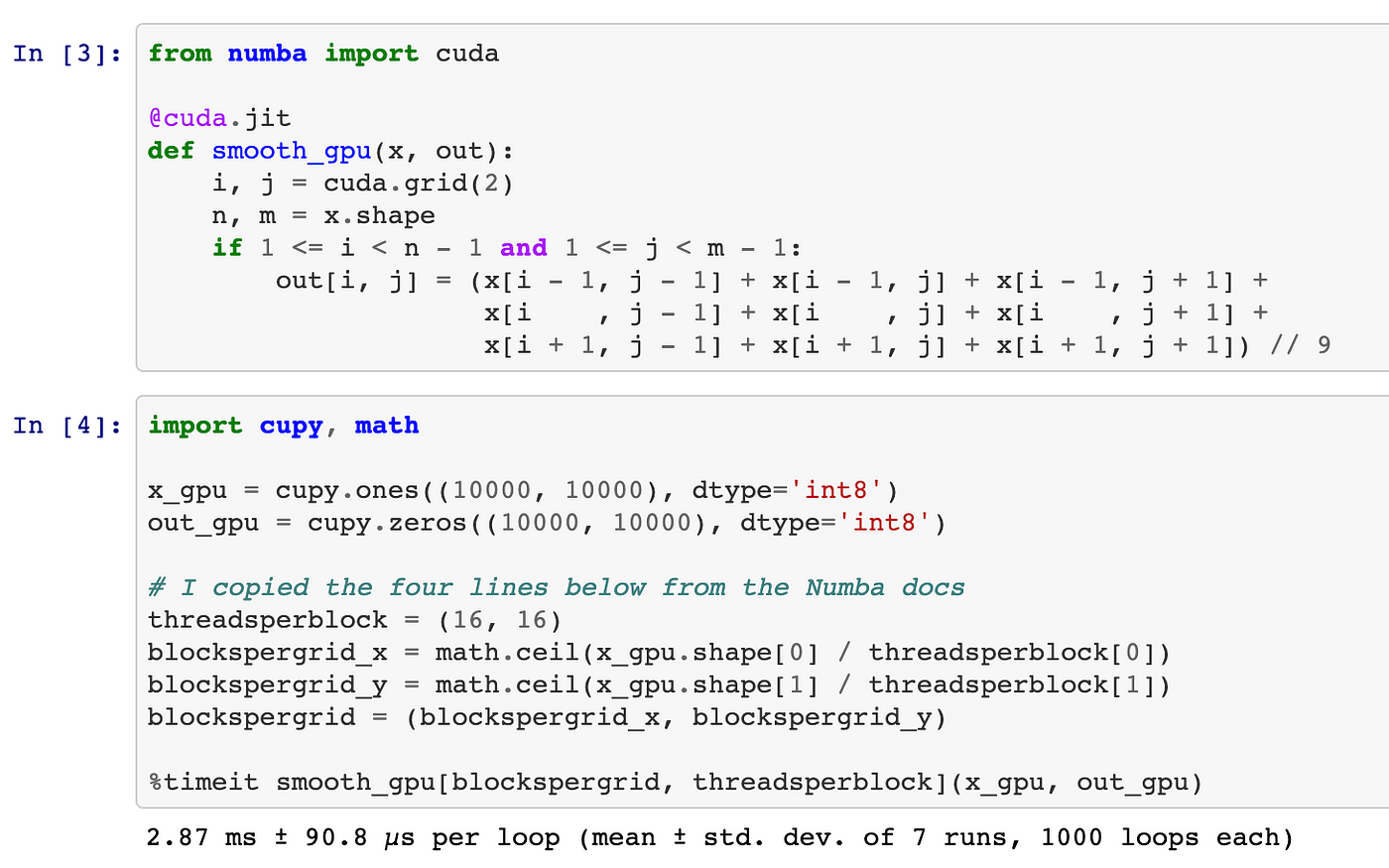

GitHub - meghshukla/CUDA-Python-GPU-Acceleration-MaximumLikelihood-RelaxationLabelling: GUI implementation with CUDA kernels and Numba to facilitate parallel execution of Maximum Likelihood and Relaxation Labelling algorithms in Python 3

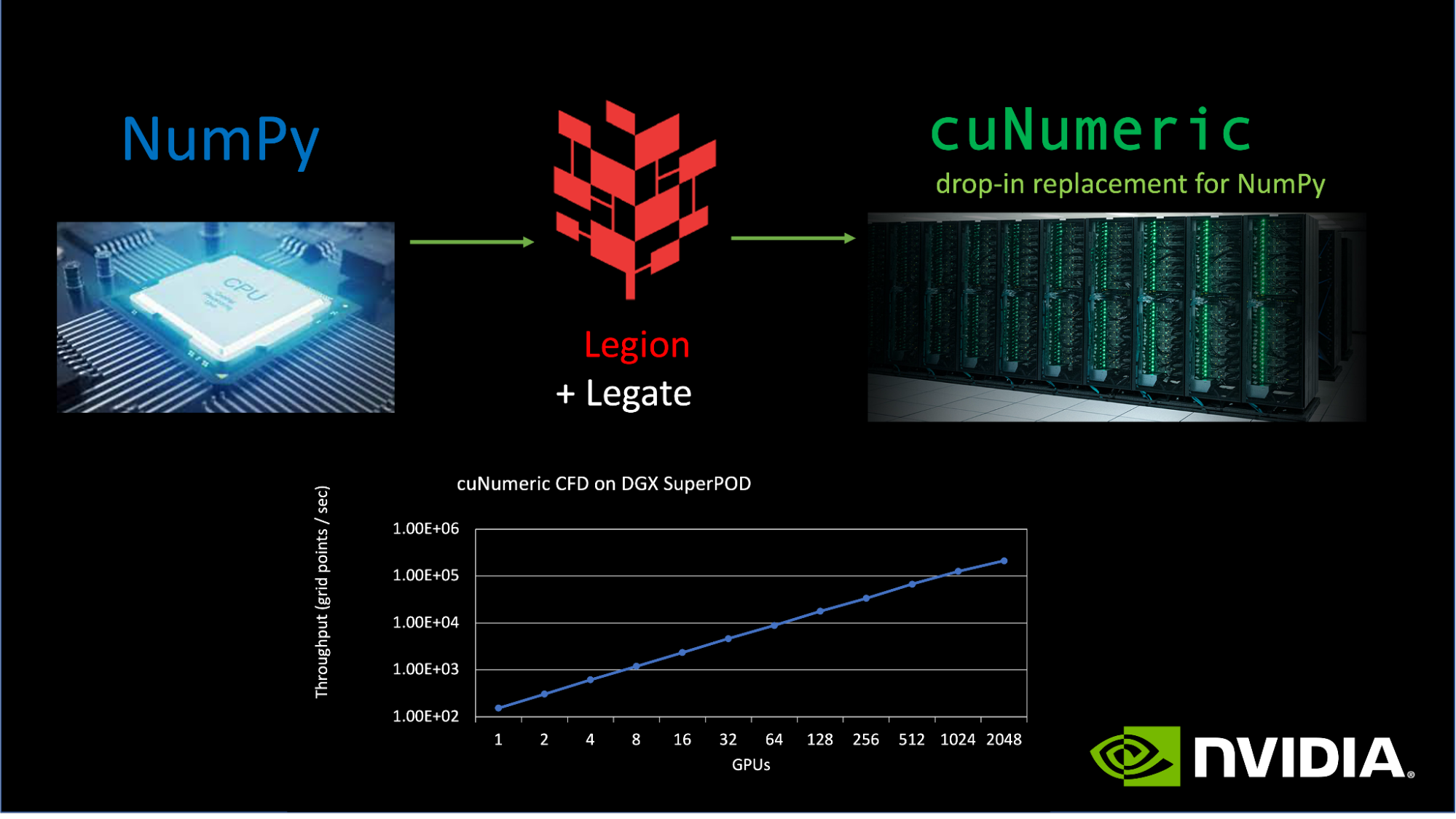

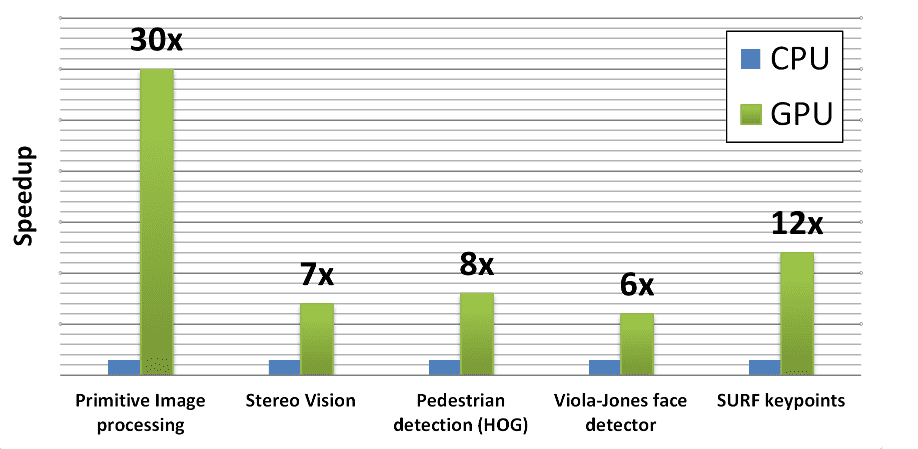

NVIDIA's Answer: Bringing GPUs to More Than CNNs - Intel's Xeon Cascade Lake vs. NVIDIA Turing: An Analysis in AI

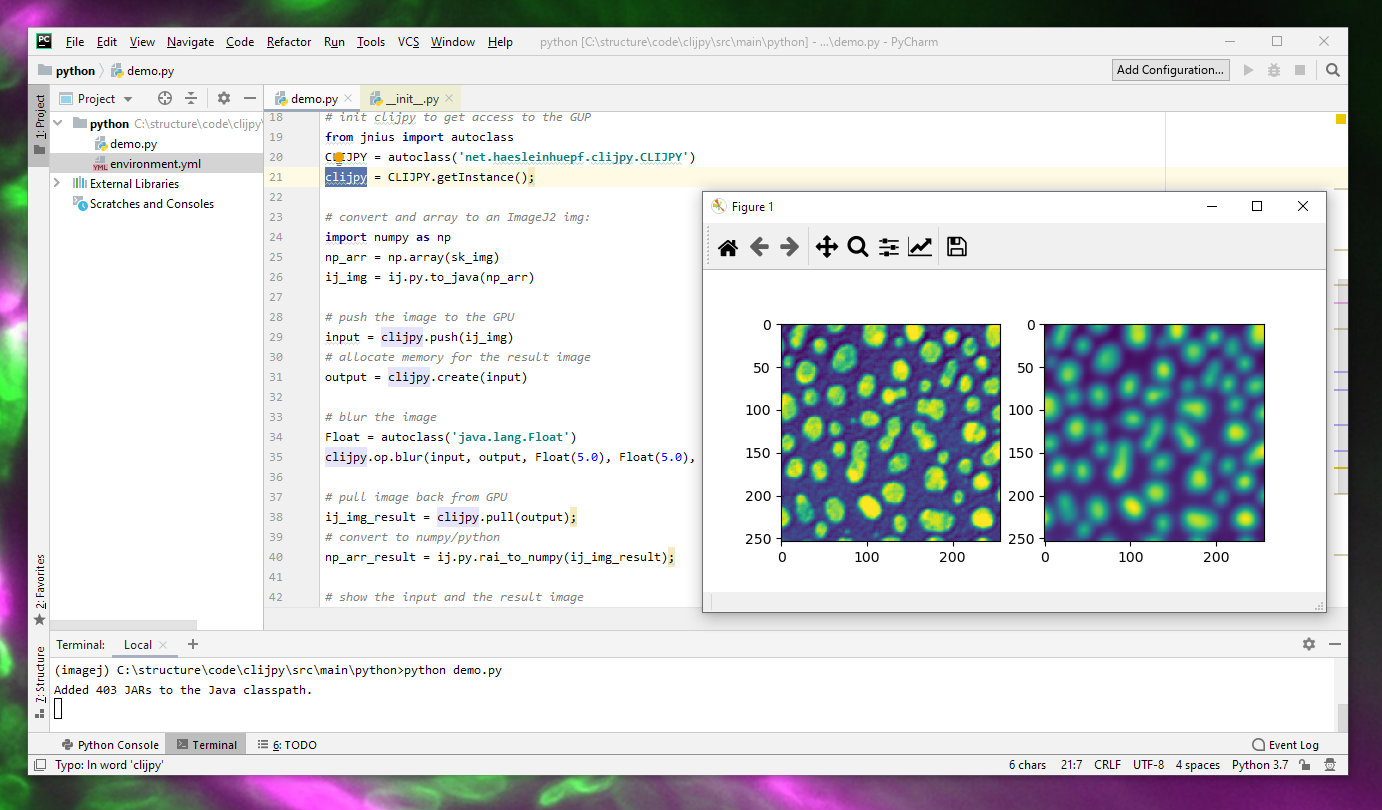

T-14: GPU-Acceleration of Signal Processing Workflows from Python: Part 1 | IEEE Signal Processing Society Resource Center

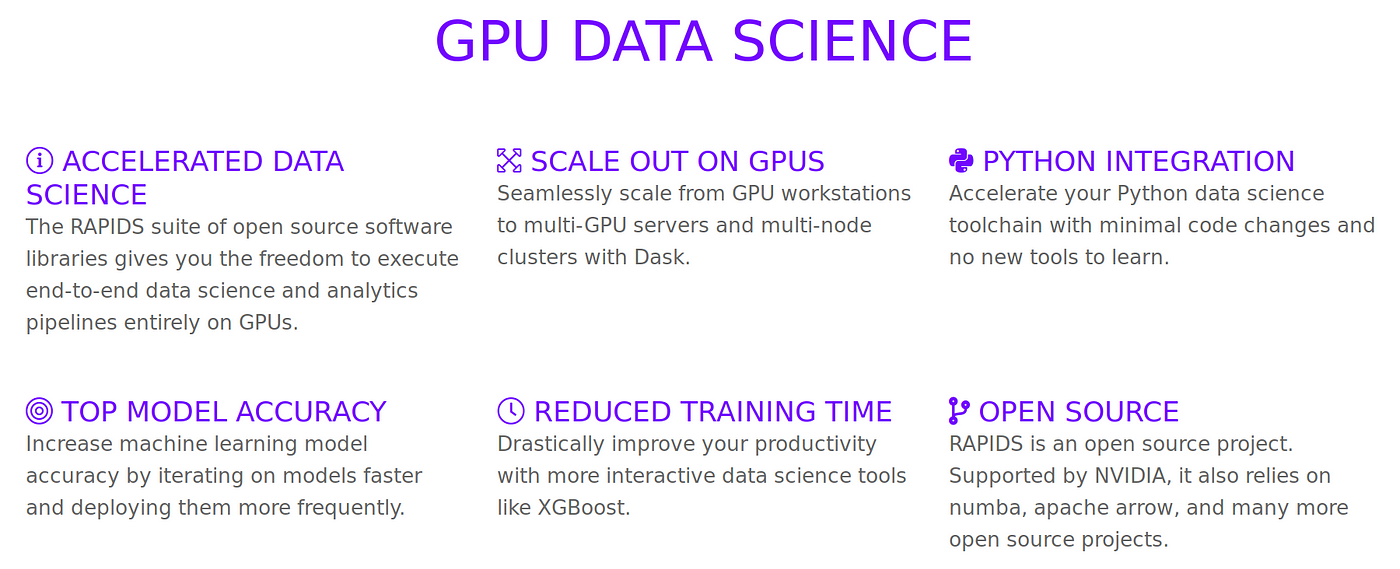

An Introduction to GPU Accelerated Machine Learning in Python - Data Science of the Day - NVIDIA Developer Forums

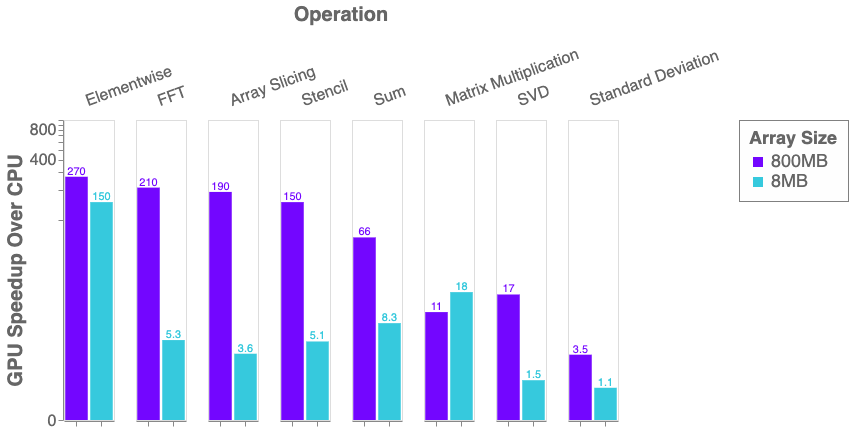

Python, Performance, and GPUs. A status update for using GPU… | by Matthew Rocklin | Towards Data Science

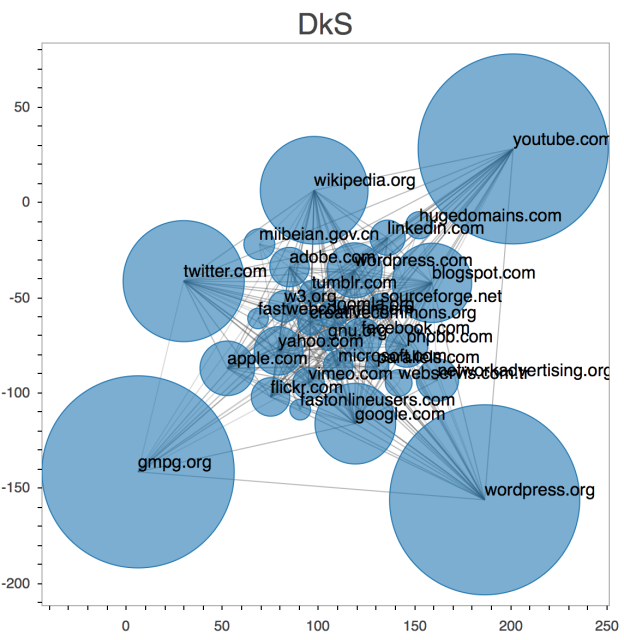

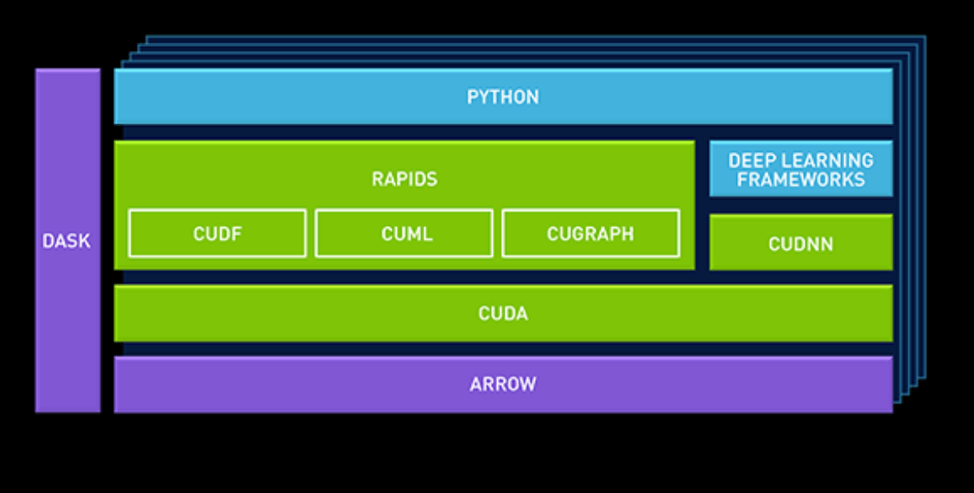

What is RAPIDS AI?. NVIDIA's new GPU acceleration of Data… | by Winston Robson | Future Vision | Medium